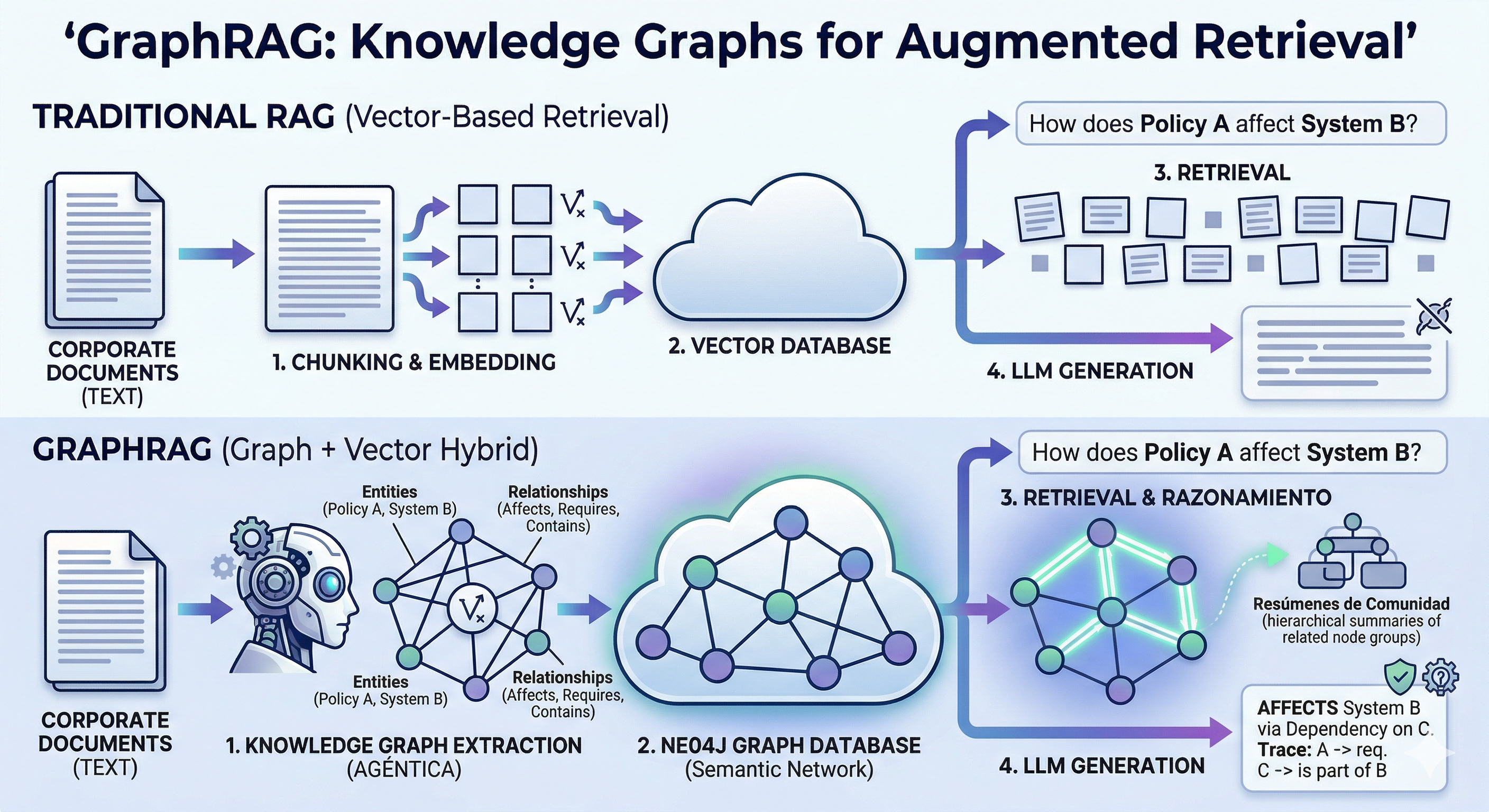

Over the past two years, Retrieval-Augmented Generation (RAG) has been the cornerstone of AI implementation in enterprises. It allowed us to connect Large Language Models (LLMs) to private data and greatly reduced, though did not eliminate, hallucinations. However, as deployments scaled, we hit a wall: the lack of global context.

Today, in 2026, the logical evolution has arrived. Welcome to the era of GraphRAG.

The Problem with Traditional RAG: Semantic “Blindness”

Conventional RAG relies on vector search. It splits documents into fragments (chunks), embeds each one as a vector, and retrieves the chunks whose vectors are most similar to the query's vector, typically by cosine similarity. This works well for specific, localized questions, but it struggles when we need:

- Connecting distant dots: The answer requires linking a fact on page 5 with its consequence on page 500.

- Thematic summaries: “Explain the risks of this security policy” requires understanding the whole, not just retrieving fragments.

- Understanding hierarchies: Embeddings capture similarity, not structure; nothing in a vector encodes that “Component A” belongs to “System B”.
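To make the “distant dots” problem concrete, here is a minimal sketch of chunk-level retrieval. It assumes a toy bag-of-words vector in place of a real embedding model, and the three chunks are invented for illustration. Each chunk is scored against the query on its own, so the chunk that carries the consequence never surfaces.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse term-count vectors.
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: list[str], k: int = 1) -> list[str]:
    # Score every chunk independently against the query and keep the top k.
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


chunks = [
    "Component A belongs to System B.",      # the fact "on page 5"
    "System B is decommissioned in March.",  # its consequence "on page 500"
    "The cafeteria menu changes weekly.",
]
query = "What happens to Component A?"

print(retrieve(query, chunks))                 # ['Component A belongs to System B.']
print(cosine(embed(query), embed(chunks[1])))  # 0.0: no shared terms with the query
```

A learned embedding model would catch synonyms that word counts miss, but the structural issue remains: chunks are ranked in isolation, with no notion that “System B” in one chunk is the same entity mentioned in another.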

What is GraphRAG and how does it change the game?

Integrating Knowledge Graphs lets AI not only “read” data but also build a mental map of it. Instead of a flat collection of text chunks, GraphRAG structures information into Nodes (entities) and Edges (relationships).
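A minimal sketch of the Nodes/Edges idea, using a plain in-memory adjacency map rather than a graph database. The relation name `belongs_to` and the entity “Platform C” are hypothetical. Because relationships are explicit, “what does Component A belong to?” becomes a traversal instead of a similarity guess:

```python
from collections import defaultdict


class KnowledgeGraph:
    """Minimal in-memory graph: nodes are entity names, edges are labeled relations."""

    def __init__(self) -> None:
        # node -> list of (relation, target node)
        self.edges: dict[str, list[tuple[str, str]]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str) -> None:
        self.edges[subject].append((relation, obj))

    def ancestors(self, node: str, relation: str) -> list[str]:
        # Follow `relation` edges transitively (e.g. chains of belongs_to).
        found, stack = [], [node]
        while stack:
            current = stack.pop()
            for rel, target in self.edges.get(current, []):
                if rel == relation and target not in found:
                    found.append(target)
                    stack.append(target)
        return found


kg = KnowledgeGraph()
kg.add("Component A", "belongs_to", "System B")
kg.add("System B", "belongs_to", "Platform C")
kg.add("Security Policy", "mandates", "MFA Authentication")

print(kg.ancestors("Component A", "belongs_to"))  # ['System B', 'Platform C']
```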

The State-of-the-Art Technical Process

To achieve this architecture, the data pipeline has evolved into three critical phases:

- Entity and Relationship Extraction: The LLM processes the data corpus to identify key concepts (people, systems, regulations, dates) and how they interact with each other.

- Community Detection: Graph clustering algorithms (Microsoft’s GraphRAG, for example, uses hierarchical Leiden) automatically group related entities into hierarchical “communities.”

- Community Summarization: An LLM generates a summary for each of these communities. When a user asks a question, the system doesn’t just look for fragments; it consults these community summaries to provide an answer with global context.
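The sketch below illustrates the community detection and summarization phases on a tiny hand-written graph. It uses label propagation, a simpler and flat (non-hierarchical) algorithm, in place of the hierarchical Leiden method used by Microsoft’s GraphRAG. It also assumes that edge weights count how often a relationship was extracted, and it replaces the LLM summarizer with a hypothetical `summarize` stub that only lists members.

```python
from collections import Counter, defaultdict
from itertools import combinations


def label_propagation(edges, max_iter=20):
    # Build a weighted, undirected adjacency map from (u, v, weight) tuples.
    adj = defaultdict(dict)
    for u, v, w in edges:
        adj[u][v] = w
        adj[v][u] = w
    labels = {node: node for node in adj}  # every node starts in its own community
    for _ in range(max_iter):
        changed = False
        for node in sorted(adj):  # fixed visiting order keeps the result deterministic
            votes = Counter()
            for neighbor, weight in adj[node].items():
                votes[labels[neighbor]] += weight
            top = max(votes.values())
            # Adopt the heaviest neighbor label; break ties alphabetically.
            best = min(label for label, score in votes.items() if score == top)
            if best != labels[node]:
                labels[node] = best
                changed = True
        if not changed:
            break
    communities = defaultdict(list)
    for node, label in labels.items():
        communities[label].append(node)
    return {label: sorted(members) for label, members in communities.items()}


def summarize(members):
    # Hypothetical stand-in for the LLM call that writes a community summary.
    return f"Community of {len(members)} entities: " + ", ".join(members)


access_controls = ["MFA", "Password Rotation", "SSO", "Security Policy"]
network_controls = ["Cilium", "Egress Policy", "Hubble", "Namespace"]
# Assumed weights: 3 = relationship extracted often, 1 = mentioned once.
edges = [(u, v, 3) for group in (access_controls, network_controls)
         for u, v in combinations(group, 2)]
edges.append(("Security Policy", "Egress Policy", 1))  # weak cross-topic link

for members in label_propagation(edges).values():
    print(summarize(members))
```

The weak cross-topic edge is outvoted by the dense links inside each group, so the two topics end up as separate communities, each with its own summary.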

Strategic Business Benefits

Implementing GraphRAG is not just an incremental improvement; it is a qualitative leap in what AI can do within an organization:

- Auditability and Traceability: Because every conclusion can be traced along explicit relationships in the graph, it is much easier to verify where an AI’s conclusion originated.

- Non-Linear Reasoning: It allows autonomous agents to navigate complex processes, such as compliance audits or system architecture design, by understanding dependencies.

- Noise Reduction: Because the system understands how information is structured, it can avoid retrieving irrelevant fragments that would only confuse the language model.
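To illustrate the auditability point, here is a minimal sketch in which every edge records the source document it was extracted from. The document names are invented for illustration. A breadth-first search returns the full edge path, so an answer built on a multi-hop chain can cite each hop.

```python
from collections import deque
from typing import Optional

Edge = tuple[str, str, str, str]  # (subject, relation, object, source document)

# Hypothetical edges with provenance attached at extraction time.
EDGES: list[Edge] = [
    ("Security Policy", "mandates", "MFA Authentication", "policy.pdf#p5"),
    ("MFA Authentication", "enforced_by", "SSO Gateway", "architecture.md"),
    ("SSO Gateway", "logs_to", "Audit Log", "runbook.md"),
]


def trace(start: str, goal: str) -> Optional[list[Edge]]:
    # Breadth-first search that returns the edge path (with sources) from start to goal.
    outgoing: dict[str, list[Edge]] = {}
    for edge in EDGES:
        outgoing.setdefault(edge[0], []).append(edge)
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for edge in outgoing.get(node, []):
            if edge[2] not in seen:
                seen.add(edge[2])
                queue.append((edge[2], path + [edge]))
    return None  # no chain of evidence connects the two entities


for subj, rel, obj, src in trace("Security Policy", "Audit Log"):
    print(f"{subj} -[{rel}]-> {obj}  (source: {src})")
```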

Deep Diving into GraphRAG: Technical Architecture and the Audit Use Case

The transition to GraphRAG is not simply a matter of swapping a vector database for a graph database; it changes the way agents “navigate” an organization’s logic. Below, we break down the necessary infrastructure and how it applies to real-world auditing.

1. The Implementation Architecture: The Modern “Stack”

To build a robust GraphRAG system in 2026, the combination of Neo4j (as graph engine and vector store) and LangGraph (for agent orchestration) has emerged as a widely adopted stack.

The Agentic Ingestion Pipeline

Ingestion is not just a matter of uploading documents; agents “understand” the structure while indexing it:

- Extraction Agent: Uses an LLM to identify Subject-Predicate-Object triples (e.g., “Security Policy -> mandates -> MFA Authentication”).

- Normalization Agent: Cleans and merges duplicate entities (for example, “MFA” and “Multi-Factor Authentication”) so that the graph does not fragment.

- Hybrid Indexing: Nodes are stored with their vector embeddings in Neo4j, allowing for semantic similarity searches combined with graph traversals.
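A minimal sketch of the extraction and normalization steps. It assumes the extraction LLM has been prompted to emit one `Subject -> predicate -> Object` triple per line (the raw output below is hard-coded, not generated) and that a hand-maintained alias table is enough to merge duplicates. Real pipelines often need fuzzier matching, such as embedding similarity, to decide merges.

```python
import re

# Hypothetical alias table mapping surface forms to one canonical entity name.
ALIASES = {
    "mfa": "MFA Authentication",
    "mfa authentication": "MFA Authentication",
    "multi-factor authentication": "MFA Authentication",
    "security policy": "Security Policy",
    "infosec policy": "Security Policy",
}


def normalize(name: str) -> str:
    # Collapse runs of whitespace, then map known aliases (case-insensitively).
    cleaned = re.sub(r"\s+", " ", name).strip()
    return ALIASES.get(cleaned.lower(), cleaned)


def parse_triples(llm_output: str) -> set[tuple[str, str, str]]:
    # Expect one "Subject -> predicate -> Object" per line; skip anything else.
    triples = set()
    for line in llm_output.splitlines():
        parts = [p.strip() for p in line.split("->")]
        if len(parts) == 3 and all(parts):
            subject, predicate, obj = parts
            triples.add((normalize(subject), predicate.lower(), normalize(obj)))
    return triples


# Hard-coded stand-in for an extraction LLM's response.
raw = """Security Policy -> mandates -> MFA Authentication
InfoSec  Policy -> mandates -> Multi-Factor Authentication
this line is not a triple"""

print(parse_triples(raw))  # {('Security Policy', 'mandates', 'MFA Authentication')}
```

Because the two differently worded lines normalize to the same triple, the set stores a single edge instead of two disconnected nodes pairs.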

Orchestration with LangGraph

Unlike linear chains, LangGraph allows for reasoning cycles. An agent can:

- Query the graph to find a policy.

- Identify that the policy depends on another regulation.

- Jump to that related regulation to verify consistency before responding.
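The cycle described above can be sketched in plain Python as a loop that keeps following `depends_on` edges until no unvisited dependency remains, then checks consistency before answering. This is not LangGraph’s API; in LangGraph the same loop would be expressed as graph nodes plus a conditional edge routing back to the query step. The policy names, `depends_on` links and control names are hypothetical.

```python
from collections import deque

# Hypothetical policy graph: what each node depends on and which controls it specifies.
GRAPH = {
    "Egress Policy": {"depends_on": ["Zero Trust Standard"], "controls": {"deny-all-egress"}},
    "Zero Trust Standard": {"depends_on": ["Logging Regulation"], "controls": {"deny-all-egress", "mtls"}},
    "Logging Regulation": {"depends_on": [], "controls": {"flow-logs"}},
}


def audit_policy(start: str) -> dict:
    frontier, visited, required = deque([start]), [], set()
    # Reasoning cycle: query a node, discover its dependencies, jump to them, repeat.
    while frontier:
        node = frontier.popleft()
        if node in visited:
            continue
        visited.append(node)
        required |= GRAPH[node]["controls"]
        frontier.extend(GRAPH[node]["depends_on"])
    # Consistency check: controls required upstream but absent from the starting policy.
    missing = required - GRAPH[start]["controls"]
    return {"visited": visited, "missing": sorted(missing)}


print(audit_policy("Egress Policy"))
# {'visited': ['Egress Policy', 'Zero Trust Standard', 'Logging Regulation'], 'missing': ['flow-logs', 'mtls']}
```

The `visited` check is what keeps a cyclic dependency graph from looping forever.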

2. Use Case: Automated Corporate Policy Audit

Imagine an organization that manages complex infrastructure, such as Kubernetes clusters, and must comply with strict security regulations (Zero Trust, egress traffic filtering, etc.).

The Problem

Traditional audits struggle because the evidence (logs, network configurations such as Cilium policies) is disconnected from the policies written in PDF documents.

The GraphRAG Solution

By integrating both into a Knowledge Graph, the audit system works like this:

- Dependency Mapping: The graph connects a “Security Incident” (e.g., a brute-force attack detected by the infrastructure provider) with the corresponding “Mitigation Policy” and the executed “Remediation Actions” (such as deleting a compromised namespace).

- Real-Time Verification: An Auditor Agent can ask the graph, “What is our stance on unauthorized egress traffic?” The system will not only retrieve the text of the “Deny-All” policy but can also check whether the corresponding network rules (such as Cilium network policies, which are enforced with eBPF) are actually applied on the cluster nodes.

- Explainable Report Generation: Instead of a list of alerts, the AI generates a report tracing the reasoning: “A vulnerability was detected in a legacy environment; the system applied a Zero Trust policy; the result was validated using observability tools (Hubble), confirming the eradication of the threat”.
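A minimal sketch of the verification and reporting steps. It assumes the graph links each written policy to the control it requires, and that the cluster’s applied network policies have already been exported into a set of names (the export step is not shown). All policy, control and incident names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    policy: str
    control: str
    applied: bool


# Hypothetical graph edges: written policy -> control it requires in the cluster.
POLICY_REQUIRES = {
    "Deny-All Egress": "default-deny-egress",
    "Zero Trust Ingress": "namespace-isolation",
}

# Hypothetical incident chain: incident -> mitigation policy -> remediation action.
INCIDENT_CHAIN = [
    ("Brute-force attack on legacy-ns", "triggered", "Zero Trust Ingress"),
    ("Zero Trust Ingress", "remediated_by", "Delete namespace legacy-ns"),
]


def verify(applied_controls: set[str]) -> list[Finding]:
    # Compare what each written policy requires with what is actually applied.
    return [Finding(p, c, c in applied_controls) for p, c in POLICY_REQUIRES.items()]


def report(findings: list[Finding]) -> str:
    # Trace the incident chain first, then list each policy check and its status.
    lines = [f"{s} --{r}--> {o}" for s, r, o in INCIDENT_CHAIN]
    for finding in findings:
        status = "applied" if finding.applied else "MISSING"
        lines.append(f"Policy '{finding.policy}' requires '{finding.control}': {status}")
    return "\n".join(lines)


cluster_state = {"default-deny-egress"}  # assumed export of applied network policies
print(report(verify(cluster_state)))
```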

Business Impact

This approach can reduce audit preparation time from weeks to minutes. Furthermore, it enables Preventative Auditing: before an incident occurs, the AI can alert if a change in a service configuration breaks the compliance hierarchy established in the graph.
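Preventative auditing can be sketched as a pre-change gate. Before a configuration change is applied, the gate computes which controls the change would remove and looks up which policies in the graph rely on them. The control-to-policy map and names are hypothetical; in practice such a check might run in a CI pipeline or a Kubernetes admission webhook.

```python
# Hypothetical graph edges: control -> policies that rely on it.
CONTROL_USED_BY = {
    "default-deny-egress": ["Deny-All Egress", "Zero Trust Standard"],
    "flow-logs": ["Logging Regulation"],
}


def check_change(current: set[str], proposed: set[str]) -> list[str]:
    # Controls present now but absent after the proposed change.
    removed = current - proposed
    # Every policy that depends on a removed control would be violated.
    return sorted(
        f"{policy} would lose '{control}'"
        for control in removed
        for policy in CONTROL_USED_BY.get(control, [])
    )


current = {"default-deny-egress", "flow-logs"}
proposed = {"flow-logs"}  # a change that drops the egress deny rule
violations = check_change(current, proposed)
if violations:
    print("Blocked:", *violations, sep="\n- ")
```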

Combining GraphRAG with multi-agent orchestration is what finally allows us to move from an AI that “answers questions” to an AI that “governs processes” with full transparency and technical rigor.